
Introduction

For a nation as linguistically and culturally diverse as India, relying on foreign “digital brains” poses a risk to both data sovereignty and cultural nuance. Sarvam-105B is a response to this challenge: a homegrown LLM designed to reflect the context of 1.4 billion people. Developed by Bengaluru-based startup Sarvam AI with hardware support from NVIDIA and Yotta, the model is the flagship of the IndiaAI Mission. It is more than a chatbot; it is positioned as the foundation for a new era of Aatmanirbharta (self-reliance) in technology. Trained on local datasets and optimized for Indian infrastructure, Sarvam-105B offers a secure, high-performance alternative to global models, keeping India’s data under Indian law while powering everything from government grievance portals to advanced software engineering.

Sovereign AI Flagship

128k Context Window

22 Indian Languages

MoE Architecture

Review

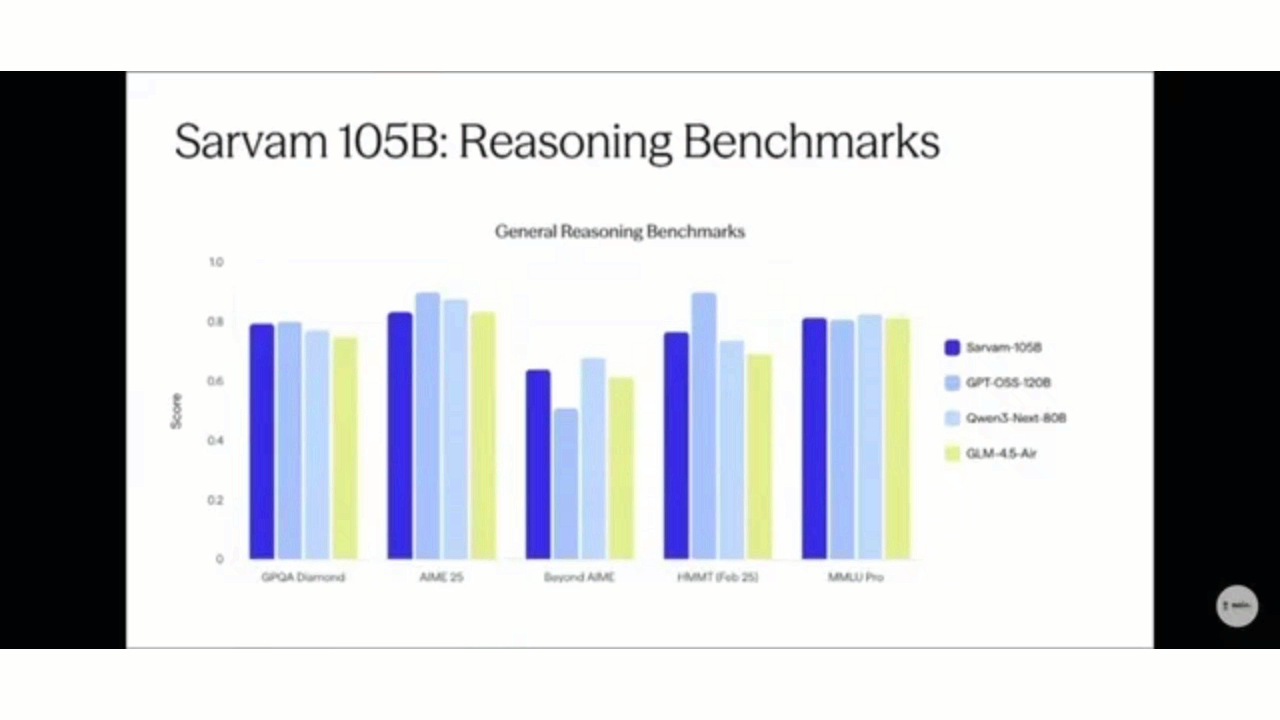

Sarvam-105B is a monumental achievement in the 2026 AI landscape, serving as India’s first large-scale sovereign foundation model. Launched in February 2026 under the government’s IndiaAI Mission, this 105-billion-parameter model was trained entirely from scratch on domestic compute infrastructure. Unlike earlier efforts that fine-tuned foreign models, Sarvam-105B takes a “ground-up” approach. It uses a Mixture-of-Experts (MoE) architecture that activates only ~9 billion parameters per token, so each token costs far less compute, and therefore energy, than it would in a dense model of the same size.
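To put the MoE figures in perspective, the short calculation below works out what share of the model is active per token. It is a back-of-the-envelope sketch: the parameter counts are the approximate ones quoted in this review, and the "2 FLOPs per active parameter" forward-pass rule of thumb is an assumption added here, not a published Sarvam number.

```python
# Figures quoted in the review; the ~9B active count is approximate.
TOTAL_PARAMS = 105e9
ACTIVE_PARAMS_PER_TOKEN = 9e9


def active_fraction(active: float, total: float) -> float:
    """Share of the parameters that take part in one token's forward pass."""
    if total <= 0:
        raise ValueError("total must be positive")
    return active / total


def approx_flops_per_token(active: float) -> float:
    """ASSUMPTION: rule-of-thumb cost of about 2 FLOPs per active parameter."""
    return 2.0 * active


fraction = active_fraction(ACTIVE_PARAMS_PER_TOKEN, TOTAL_PARAMS)
moe_flops = approx_flops_per_token(ACTIVE_PARAMS_PER_TOKEN)
dense_flops = approx_flops_per_token(TOTAL_PARAMS)

print(f"Active share per token: {fraction:.1%}")
print(f"Approx. compute vs. a dense 105B model: {moe_flops / dense_flops:.1%}")
```

Note that the saving applies to compute per token. An MoE model typically still needs all of its weights loaded for serving, so memory requirements follow the full 105B figure.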

The model is specifically engineered for complex reasoning, coding, and enterprise-grade workflows. It features a 128,000-token context window, allowing it to digest entire legal filings or financial reports in a single prompt. Reported benchmarks show it outperforming models roughly six times its size, including certain evaluations where it surpassed China’s DeepSeek-R1. While the largest Llama 3.1 variant (405B) is bigger in raw parameter count, Sarvam-105B’s competitive edge lies in its native understanding of all 22 official Indian languages and code-mixed formats like “Hinglish,” making it a natural choice for Bharat-scale deployment.

Features

Mixture-of-Experts (MoE) Design

Activates only a fraction of its total "brain" (about 9B out of 105B parameters) for each token, drastically reducing inference costs and power usage.

Massive Context Window

Supports up to 128,000 tokens, enabling the analysis of long documents like balance sheets, technical manuals, and case laws.

Native Multilingual Support

High-accuracy reasoning across all 22 official Indian languages and seamless handling of code-mixed language (e.g., Hindi mixed with English).

Agentic Workflow Ready

Optimized for complex, multi-step reasoning tasks such as legacy code migration and automated customer resolution.

Sovereign Data Security

Training and deployment happen entirely within Indian jurisdiction, simplifying compliance with the DPDP Act.

Indus App Integration

The beta version of 105B is accessible via the Indus app on iOS, Android, and the web, serving as a "desi" rival to ChatGPT.

Best Suited for

Enterprise Software Engineering

Automating bug fixes, test generation, and legacy code analysis at scale.

Legal & Compliance Firms

Summarizing thousands of pages of legal filings and checking contracts for compliance in local languages.

Government Agencies

Powering citizen query resolution and scheme eligibility checking through voice-first agents.

Banking & Finance

Analyzing complex financial statements and automating multilingual customer support for mass-market reach.

Healthcare Providers

Triage and medical record summarization in regional dialects for low-literacy users.

Agricultural Advisors

Providing pest identification and crop guidance in native languages to farmers.

Strengths

Optimized for India

Computational Efficiency

Independence from Foreign Models

Strong Reasoning Power

Weaknesses

Still Scaling

Deployment Complexity

Getting Started with Sarvam-105B: Step-by-Step Guide

Step 1: Access the Model

Download the Indus app from the Google Play Store or the Apple App Store, or visit the Sarvam AI web portal to start using the 105B beta.

Step 2: Initialize via API

Developers can sign up on Sarvam Cloud to get API keys. Every new account starts with ₹1,000 in free credits.
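Once you have a key, a first request might be assembled along the following lines. This is a minimal sketch and not Sarvam's documented API: the endpoint URL, the model identifier, the `SARVAM_API_KEY` environment variable name, and the bearer-token header are all placeholders assumed for illustration, so check the official API reference for the real values. The code only builds the request; it does not send it.

```python
import json
import os
import urllib.request

# ASSUMPTIONS: the URL, model id, and auth header below are placeholders,
# not values confirmed by the provider. Replace them from the official docs.
API_URL = "https://api.example.invalid/v1/chat/completions"  # hypothetical
MODEL_ID = "sarvam-105b"  # hypothetical model identifier


def build_chat_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a JSON POST request carrying one user message."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        # ensure_ascii=False keeps Indic-script prompts readable in the body.
        data=json.dumps(payload, ensure_ascii=False).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


# Read the key from the environment so it never lands in source control.
api_key = os.environ.get("SARVAM_API_KEY", "demo-key")  # hypothetical name
request = build_chat_request("नमस्ते! आप कैसे हैं?", api_key)
print(request.get_method(), request.full_url)
# To actually send it: urllib.request.urlopen(request, timeout=30)
```

Keeping request construction separate from sending makes the payload easy to inspect and unit-test before any credits are spent.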

Step 3: Choose Your Deployment

Decide between Sarvam Cloud (fastest setup), Private Cloud (for secure perimeters), or On-Premises (air-gapped for regulated industries).

Step 4: Prompt in Your Language

Enter prompts in any of the 22 official Indian languages. For enterprise tasks, supply a long document, such as the text of a PDF, for analysis; the document and your instructions together must fit within the 128,000-token context window.
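Before sending a long document, it can help to estimate whether it will fit in the window. The sketch below does this with a crude characters-per-token ratio. That ratio, the reserved answer budget, and the helper names are assumptions made for illustration; real token counts depend on the model's tokenizer and on the script being used, so measure with the actual tokenizer where possible.

```python
import math

CONTEXT_WINDOW_TOKENS = 128_000  # limit quoted in this guide

# ASSUMPTION: placeholder ratio; varies by tokenizer and by script.
ASSUMED_CHARS_PER_TOKEN = 4.0


def estimate_tokens(text: str, chars_per_token: float = ASSUMED_CHARS_PER_TOKEN) -> int:
    """Rough token estimate from character count, rounded up."""
    return math.ceil(len(text) / chars_per_token)


def fits_in_context(text: str, reserved_for_answer: int = 4_000) -> bool:
    """True if the text plus a reserved answer budget fits in the window."""
    return estimate_tokens(text) + reserved_for_answer <= CONTEXT_WINDOW_TOKENS


def split_into_chunks(text: str, max_tokens: int,
                      chars_per_token: float = ASSUMED_CHARS_PER_TOKEN) -> list[str]:
    """Split text into consecutive pieces of at most max_tokens (estimated)."""
    max_chars = int(max_tokens * chars_per_token)
    return [text[i:i + max_chars] for i in range(0, len(text), max_chars)]


document = "word " * 200_000  # stand-in for text extracted from a long PDF
print("Estimated tokens:", estimate_tokens(document))
print("Fits in one prompt:", fits_in_context(document))

chunks = split_into_chunks(document, max_tokens=CONTEXT_WINDOW_TOKENS - 4_000)
print("Chunks needed:", len(chunks))
```

Splitting on raw character offsets can cut a sentence in half; a production pipeline would more likely split on page, section, or paragraph boundaries.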

Step 5: Build Grounding Layers

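If you are building a domain-specific app (such as legal or medical), integrate your private data using retrieval-augmented generation (RAG): retrieve the relevant passages first and include them in the prompt, so the model’s answers stay “grounded” in your facts rather than in its probabilistic guesses.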
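The grounding idea can be sketched in a few lines. The example below uses plain keyword overlap as the retriever, which is a deliberate simplification and not how Sarvam or any particular RAG framework does it; production systems usually rely on embedding-based search. The sample clauses, function names, and prompt wording are all hypothetical, and the tokenizer here handles only Latin letters and digits.

```python
import re

# Hypothetical private knowledge base (for example, clauses from a lease).
KNOWLEDGE_BASE = [
    "Clause 12: The tenant must give 60 days written notice before vacating.",
    "Clause 7: Rent is due on the fifth day of each month.",
    "Clause 3: The security deposit equals two months of rent.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase set of Latin-alphabet words and numbers (demo only)."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def score(query: str, passage: str) -> int:
    """Number of distinct words shared by the query and the passage."""
    return len(tokenize(query) & tokenize(passage))


def retrieve(query: str, passages: list[str], top_k: int = 2) -> list[str]:
    """Return up to top_k passages with the highest non-zero overlap."""
    ranked = sorted(passages, key=lambda p: score(query, p), reverse=True)
    return [p for p in ranked[:top_k] if score(query, p) > 0]


def build_grounded_prompt(query: str, passages: list[str]) -> str:
    """Put the retrieved passages ahead of the question in one prompt."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below. "
        "If the answer is not in the context, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


query = "How many days of notice must the tenant give?"
retrieved = retrieve(query, KNOWLEDGE_BASE)
prompt = build_grounded_prompt(query, retrieved)
print(prompt)
```

The resulting prompt is what would be sent to the model. Because the answer is supposed to come from the retrieved passages, it can be checked against the source clause.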

Frequently Asked Questions

Q: Is Sarvam-105B open source?

A: Yes. Sarvam AI has released the model weights and inference code under a permissive license to empower developers and researchers.

Q: Can I use it for "Hinglish"?

A: Absolutely. One of Sarvam’s core strengths is its ability to interpret code-mixed languages that foreign models often struggle with.

Q: What is the "Indus" app?

A: Indus is the consumer-facing chatbot app launched by Sarvam AI that uses the 105B model as its engine.

Pricing

Sarvam AI offers transparent pay-per-use pricing. The Sarvam-M chat model is currently offered at no charge to encourage ecosystem growth.

| Plan | Monthly Cost | Rate Limit | Key Features |
| --- | --- | --- | --- |
| Starter | Pay as you go | 60 requests/min | ₹1,000 free credits, community support, ideal for prototyping. |
| Pro | ₹10,000 | 200 requests/min | ₹1,000 bonus credits, email support, ideal for POCs. |
| Business | ₹50,000 | 1,000 requests/min | ₹7,500 bonus credits, Slack + Solutions Engineer support. |
| Enterprise | Custom | Custom | Dedicated rate limits, SSO, and 24/7 priority support. |
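The rate limits translate directly into how quickly a batch job can run. The sketch below does that arithmetic for the three fixed tiers; the limits are copied from the pricing table, while the batch size and helper names are illustrative assumptions.

```python
# Requests-per-minute limits copied from the pricing table.
PLAN_LIMITS_RPM = {"Starter": 60, "Pro": 200, "Business": 1000}


def min_interval_seconds(rpm: int) -> float:
    """Smallest gap between requests that stays within the limit."""
    return 60.0 / rpm


def minutes_to_process(num_requests: int, rpm: int) -> float:
    """Lower bound on wall-clock minutes for a batch at full rate."""
    return num_requests / rpm


BATCH_SIZE = 10_000  # hypothetical workload

for plan, rpm in PLAN_LIMITS_RPM.items():
    gap = min_interval_seconds(rpm)
    duration = minutes_to_process(BATCH_SIZE, rpm)
    print(f"{plan:<9} gap >= {gap:.2f}s, {BATCH_SIZE} requests >= {duration:.1f} min")
```

These are lower bounds that ignore model latency and retries, but they give a quick sense of which tier a given workload needs.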

Alternatives

BharatGPT

A rival Indian initiative focusing heavily on cultural alignment and specific government use cases.

Llama 3.1 (Meta)

A global leader in open-weight AI; its largest variant (405B) is bigger in scale but lacks the deep Indian-language optimization of Sarvam.

DeepSeek-R1 (China)

A massive 671B-parameter model known for high efficiency, though Sarvam-105B has shown superior performance in specific Indian benchmarks.
