The Microsoft Agent Governance Toolkit is a high-performance, seven-package open-source system designed to bring deterministic control to autonomous AI agents.

Introduction

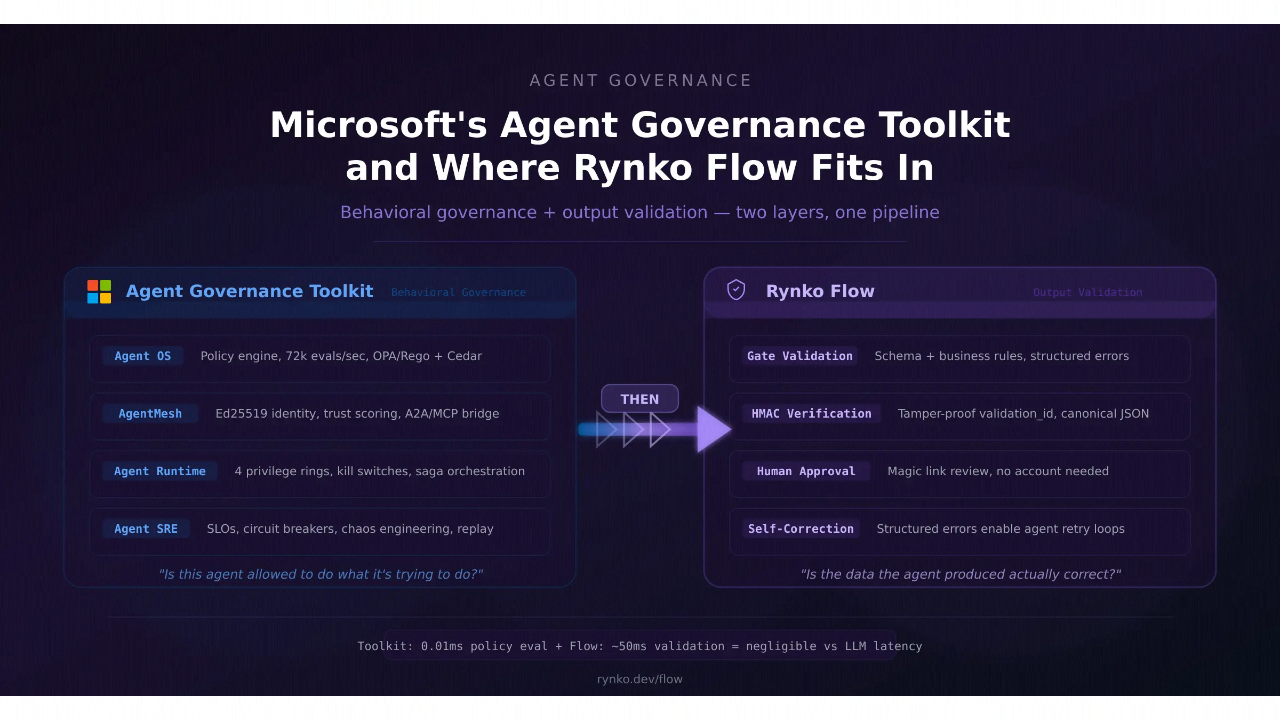

In 2026, the bottleneck for AI isn’t capability; it’s trust. When an agent has the power to pause an ad campaign, update a CRM, or execute code, a single “hallucination” can become a costly operational failure. Microsoft’s Agent Governance Toolkit represents a shift from “if-then” prompts to a robust runtime security architecture. By borrowing established computing patterns, such as CPU privilege rings and service meshes, Microsoft has provided a framework-agnostic “shield” that lets agents operate independently while staying strictly within corporate guardrails. For developers and IT admins, this toolkit is the missing “instruction manual” for deploying safe, scalable, and compliant autonomous systems.

MIT Licensed

MITRE/OWASP Mapped

<0.1ms Latency

Multi-Language Support

Review

The Microsoft Agent Governance Toolkit, released on April 2, 2026, is a high-performance, seven-package open-source system designed to bring deterministic control to autonomous AI agents. While frameworks like AutoGen and LangChain have made autonomy easy to deploy, they have often lacked a standard “governance layer” to prevent agents from taking rogue actions. Microsoft’s toolkit addresses this with its Agent OS layer, a stateless policy engine that intercepts agent actions (tool calls, API requests) before they execute, with a p99 latency of under 0.1 milliseconds, so security checks don’t slow down execution.

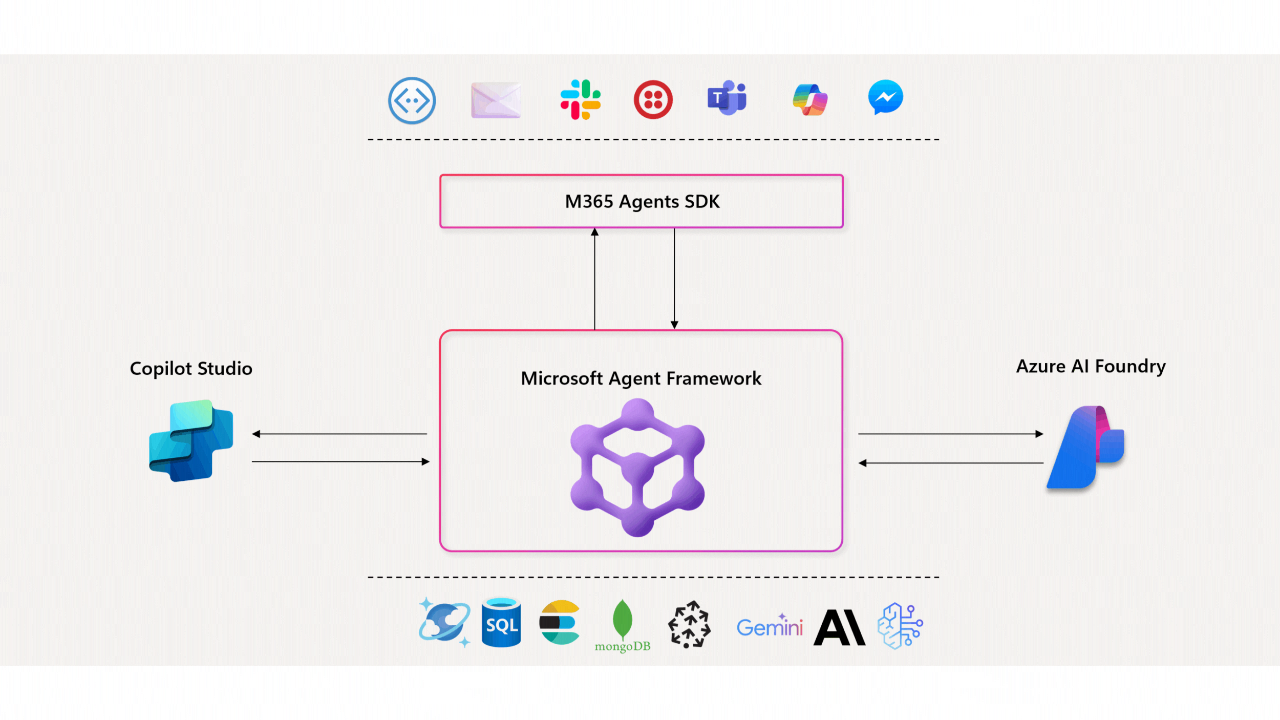

The toolkit is lauded for being the first to address all 10 OWASP agentic AI risks, including goal hijacking and tool misuse, through “kernel-style” privilege separation. It is highly versatile, supporting Python, TypeScript, Rust, Go, and .NET, and it hooks natively into existing pipelines such as Azure AI Foundry Agent Service and CrewAI. It is not a content-moderation tool: it governs what an agent does, not what it says. Within that scope, it is the definitive “action-governance” shield for enterprises moving out of “pilot purgatory” into production-scale agentic workflows.

Features

Agent OS (Policy Engine)

A sub-millisecond interceptor that validates every agent action against YAML, OPA Rego, or Cedar policies before execution.
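The interception step can be pictured in plain Python. This is a conceptual sketch, not the toolkit's API. The `POLICY` dictionary stands in for a parsed YAML policy, and `evaluate`, `governed_call`, and `PolicyViolation` are hypothetical names. Deny rules take precedence, and any tool that is not explicitly allowed is blocked by default.

```python
from fnmatch import fnmatch
from typing import Any, Callable

# Hypothetical policy, standing in for a parsed YAML/Rego/Cedar policy file.
POLICY = {
    "deny": ["delete_*", "drop_table"],
    "allow": ["search_*", "read_crm_record", "pause_campaign"],
}


class PolicyViolation(Exception):
    """Raised when a tool call is blocked before execution."""


def evaluate(tool_name: str, policy: dict) -> str:
    """Return 'allow' or 'deny' for a tool name; deny rules win over allow rules."""
    if any(fnmatch(tool_name, pattern) for pattern in policy.get("deny", [])):
        return "deny"
    if any(fnmatch(tool_name, pattern) for pattern in policy.get("allow", [])):
        return "allow"
    return "deny"  # default-deny: unlisted tools are blocked


def governed_call(tool_name: str, tool: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
    """Intercept a tool call: evaluate the policy first, run the tool only if allowed."""
    if evaluate(tool_name, POLICY) != "allow":
        raise PolicyViolation(f"blocked by policy: {tool_name}")
    return tool(*args, **kwargs)
```

The check runs before `tool` is invoked. A call such as `governed_call("delete_database", ...)` therefore never reaches the underlying API and raises `PolicyViolation` instead.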

Agent Mesh (Identity)

Provides cryptographic identities (Decentralized Identifiers) and secure Inter-Agent Trust Protocols for multi-agent systems.
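The sketch below shows why cryptographic identity lets an agent reject messages from unverified peers: every inter-agent message is signed, then verified on receipt. It deliberately substitutes a shared-secret HMAC from the Python standard library for the public-key signatures a DID-based scheme would use. The `did:example:` identifiers, the key registry, and the function names are hypothetical. None of this is the toolkit's Inter-Agent Trust Protocol.

```python
import hashlib
import hmac
import json
from typing import Optional

# Hypothetical key registry. A DID-based scheme would resolve each agent's
# public key from its DID document instead of sharing secrets like this.
KEY_REGISTRY = {
    "did:example:planner": b"planner-demo-secret",
    "did:example:executor": b"executor-demo-secret",
}


def sign_message(sender: str, payload: dict) -> dict:
    """Wrap a payload with the sender's ID and an HMAC-SHA256 tag over canonical JSON."""
    body = json.dumps({"sender": sender, "payload": payload}, sort_keys=True)
    tag = hmac.new(KEY_REGISTRY[sender], body.encode(), hashlib.sha256).hexdigest()
    return {"body": body, "tag": tag}


def verify_message(message: dict) -> Optional[dict]:
    """Return the payload if the sender is registered and the tag matches, else None."""
    envelope = json.loads(message["body"])
    key = KEY_REGISTRY.get(envelope.get("sender"))
    if key is None:
        return None  # unregistered agent: reject
    expected = hmac.new(key, message["body"].encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, message["tag"]):
        return None  # altered body or forged tag: reject
    return envelope["payload"]
```

`hmac.compare_digest` is used instead of `==` so that verification time does not leak how many characters of a forged tag were correct.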

Agent Runtime (Isolation)

Implements 4-tier privilege rings (similar to an OS kernel) to restrict capabilities based on an agent’s verified trust score.
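Here is a minimal sketch of trust-gated rings. As with CPU protection rings, it assumes Ring 0 is the most privileged and Ring 3 the most restricted. The capability sets and score thresholds are hypothetical. They are chosen only to show how a verified trust score can narrow what an agent may do.

```python
# Hypothetical capability sets per ring; a lower ring number means more privilege.
RING_CAPABILITIES = {
    0: {"read", "write", "execute_code", "manage_infrastructure"},
    1: {"read", "write", "execute_code"},
    2: {"read", "write"},
    3: {"read"},
}

# Hypothetical minimum trust score (0.0 to 1.0) needed for each ring, most privileged first.
RING_THRESHOLDS = [(0, 0.95), (1, 0.8), (2, 0.5)]


def ring_for(trust_score: float) -> int:
    """Map a verified trust score to the most privileged ring it qualifies for."""
    for ring, minimum in RING_THRESHOLDS:
        if trust_score >= minimum:
            return ring
    return 3  # anything below every threshold lands in the most restricted ring


def is_permitted(trust_score: float, capability: str) -> bool:
    """Check whether an agent with this trust score may use a capability."""
    return capability in RING_CAPABILITIES[ring_for(trust_score)]
```

For example, an agent scoring 0.6 lands in Ring 2. It can read and write but cannot execute code.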

Agent SRE (Reliability)

Applies Site Reliability Engineering principles like circuit breakers, SLO enforcement, and error budgets to prevent cascading failures.
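The circuit-breaker idea fits in a few lines. After a run of consecutive failures, the breaker "opens" and rejects calls for a cool-down period, rather than letting an agent keep retrying a failing API. This is a generic sketch of the pattern with hypothetical names and thresholds, not Agent SRE's implementation.

```python
import time
from typing import Any, Callable, Optional


class CircuitOpenError(Exception):
    """Raised when a call is rejected because the breaker is open."""


class CircuitBreaker:
    """Opens after `max_failures` consecutive errors; allows a trial call after `reset_after` seconds."""

    def __init__(self, max_failures: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock  # injectable so tests can control time
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("circuit open: call rejected")
            self.opened_at = None  # half-open: let one trial call through
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()  # trip (or re-trip) the breaker
            raise
        self.failures = 0  # any success closes the circuit and resets the count
        return result
```

The failure count is not cleared when the breaker goes half-open. A failed trial call therefore re-opens the circuit immediately, while a successful one closes it.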

Agent Compliance

Automatically maps agent behavior to regulatory and security frameworks such as the EU AI Act, HIPAA, and SOC 2, collecting audit evidence as agents run.
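Audit evidence is only useful if it cannot be quietly edited after the fact. One common technique is a hash-chained log, sketched here as an assumption rather than a description of Agent Compliance internals. Each record stores the SHA-256 hash of the previous record, so altering any entry breaks verification. The `frameworks` tags are illustrative labels, not official control mappings, and a real log would also carry timestamps.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(record: Dict[str, Any]) -> str:
    """SHA-256 over a canonical JSON encoding of the record."""
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode()).hexdigest()


def append_event(log: List[Dict[str, Any]], agent: str, action: str,
                 decision: str, frameworks: List[str]) -> None:
    """Append an agent event linked to the hash of the previous entry."""
    prev = log[-1]["hash"] if log else GENESIS
    record = {"agent": agent, "action": action, "decision": decision,
              "frameworks": frameworks, "prev": prev}
    record["hash"] = _digest(record)  # hash covers every field except itself
    log.append(record)


def verify_chain(log: List[Dict[str, Any]]) -> bool:
    """Recompute every hash and link; an edited or reordered entry fails."""
    prev = GENESIS
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if record["prev"] != prev or _digest(body) != record["hash"]:
            return False
        prev = record["hash"]
    return True
```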

Cross-Model Verification Kernel

Uses majority voting between different LLMs to validate critical agent decisions, mitigating "memory poisoning" or hallucinations.
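Majority voting across models reduces to a simple rule: accept a decision only if enough independent verdicts agree. The sketch assumes each model's verdict has already been collected as a normalized string. The model calls themselves are out of scope, and the function name and 2/3 quorum are hypothetical.

```python
from collections import Counter
from typing import Optional, Sequence


def majority_verdict(verdicts: Sequence[str], quorum: float = 2 / 3) -> Optional[str]:
    """Return the verdict shared by at least `quorum` of the models, or None.

    `verdicts` holds one normalized answer per model, e.g. "approve" or "reject".
    None means there is no consensus, so the action should be escalated, not executed.
    """
    if not verdicts:
        return None
    answer, count = Counter(verdicts).most_common(1)[0]
    return answer if count / len(verdicts) >= quorum else None
```

With three models, two matching votes meet the 2/3 quorum. A three-way split returns `None`.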

Best Suited for

Enterprise AI Architects

Designing complex, multi-agent systems that must interact with production databases and APIs.

DevSecOps Teams

Requiring automated audit trails and runtime security for autonomous "Agentic SIEM" or coding workflows.

Compliance Officers

Needing to prove that autonomous agents are operating within the boundaries of the EU AI Act or industry-specific regulations.

Fortune 500 Enterprises

Moving out of "experimentation" into high-risk automated actions like financial transactions or infrastructure management.

Cloud-Native Developers

Deploying agents via Azure Kubernetes Service (AKS) or Azure Container Apps using sidecar governance models.

Open-Source Contributors

Seeking a framework-agnostic toolkit that works with LangChain, AutoGen, CrewAI, and LlamaIndex.

Strengths

Zero Performance Tax

Deterministic Control

Complete Risk Coverage

Free & Agnostic

Weaknesses

Action-Only Focus

Initial Complexity

Getting Started with Agent Governance: Step-by-Step Guide

Step 1: Install the Core Package

Install the specific package for your environment (e.g., pip install agent-governance-os for Python).

Step 2: Define Your Policy

Create a policy.yaml file to define “Allowed” vs. “Blocked” tools. Set up human-in-the-loop approval gates for sensitive actions like delete_database.
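The toolkit defines its own policy.yaml schema, which is not reproduced here. As an assumption, the sketch below mirrors a policy with `allow`, `block`, and `require_approval` lists as a Python dictionary. It shows how a human-in-the-loop gate can hold `delete_database` until an approver callback says yes. `POLICY`, `run_tool`, and the approver are hypothetical.

```python
from typing import Any, Callable, Dict

# Hypothetical equivalent of a policy.yaml such as:
#   allow: [search_docs, read_crm_record]
#   block: [drop_table]
#   require_approval: [delete_database]
POLICY: Dict[str, set] = {
    "allow": {"search_docs", "read_crm_record"},
    "block": {"drop_table"},
    "require_approval": {"delete_database"},
}


def run_tool(name: str, tool: Callable[[], Any],
             approver: Callable[[str], bool]) -> Dict[str, Any]:
    """Execute `tool` only if policy permits it, asking `approver` for gated tools."""
    if name in POLICY["block"]:
        return {"status": "blocked", "tool": name}
    if name in POLICY["require_approval"]:
        if not approver(name):  # e.g. a human reviewer rejects the request
            return {"status": "denied_by_human", "tool": name}
    elif name not in POLICY["allow"]:
        return {"status": "blocked", "tool": name}  # unlisted tools are blocked
    return {"status": "executed", "tool": name, "result": tool()}
```

In practice, `approver` would be whatever pauses the agent and waits for a person's decision. Passing it in as a callback keeps the gate independent of any particular review UI.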

Step 3: Secure Your Agent Mesh

Initialize Agent Mesh to give your agent a cryptographic identity. This ensures only verified agents can communicate with each other.

Step 4: Set Up Privilege Rings

Configure the Agent Runtime to place your agent in a restricted ring (Ring 3) until its trust score improves through successful, policy-compliant tasks.
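The promotion idea in this step can be sketched as a small state machine. None of the following rules come from the toolkit:

- An agent starts in Ring 3.
- It moves up one ring after each streak of compliant tasks.
- It is never promoted to Ring 0 automatically.
- It drops back to Ring 3 on any violation.

The class and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RingAssignment:
    """Hypothetical promotion policy: start in Ring 3, move up one ring per
    `streak_needed` consecutive compliant tasks, reset to Ring 3 on any violation."""

    ring: int = 3
    streak: int = 0
    streak_needed: int = 10
    max_privilege_ring: int = 1  # Ring 0 is never granted automatically in this sketch

    def record_task(self, compliant: bool) -> int:
        """Update the assignment after one task and return the current ring."""
        if not compliant:
            self.ring, self.streak = 3, 0  # any violation resets to the restricted ring
            return self.ring
        self.streak += 1
        if self.streak >= self.streak_needed and self.ring > self.max_privilege_ring:
            self.ring -= 1  # promote one ring (lower number = more privilege)
            self.streak = 0
        return self.ring
```

Resetting straight to Ring 3, rather than demoting by one ring, makes a single violation expensive. That is a conservative choice suited to agents with write access.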

Step 5: Monitor Compliance

Connect to the Agent Compliance package to start auto-generating reports for the EU AI Act or SOC 2 based on real-time agent execution data.
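Report generation largely comes down to grouping execution events by the framework they provide evidence for. The sketch assumes each event already carries framework tags, as an interceptor or audit log might record them. The event fields, tag strings, and function names are hypothetical. They are not official EU AI Act or SOC 2 control mappings.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, List


def build_report(events: Iterable[dict]) -> Dict[str, Dict[str, int]]:
    """Tally policy decisions per framework tag, e.g. {"SOC 2": {"allow": 1, "deny": 1}}."""
    tallies: Dict[str, Counter] = defaultdict(Counter)
    for event in events:
        for framework in event["frameworks"]:
            tallies[framework][event["decision"]] += 1
    return {framework: dict(counts) for framework, counts in sorted(tallies.items())}


def render(report: Dict[str, Dict[str, int]]) -> List[str]:
    """Format the tallies as plain-text report lines, one per framework."""
    return [
        f"{framework}: " + ", ".join(f"{d}={n}" for d, n in sorted(counts.items()))
        for framework, counts in report.items()
    ]
```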

Frequently Asked Questions

Q: Does this stop my agent from hallucinating?

A: Not directly. It stops an agent from acting on a hallucination (e.g., trying to access a tool it shouldn’t) through deterministic policies.

Q: Is it only for Microsoft's agents?

A: No. It is framework-agnostic and works with LangChain, OpenAI Agents SDK, CrewAI, LlamaIndex, and Google ADK.

Q: What is a "Kill Switch"?

A: It is a feature in Agent Runtime that allows an admin or a monitoring agent to instantly terminate a rogue agent’s execution thread.
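Python threads cannot be forcibly killed, so a kill switch there is usually cooperative. The agent loop checks a shared flag between actions and stops at the next boundary once the flag is set. The sketch uses `threading.Event` for that flag. The loop, its steps, and the names are hypothetical and do not describe Agent Runtime's actual mechanism.

```python
import threading
from typing import List


def agent_loop(kill_switch: threading.Event, log: List[str], max_steps: int = 1000) -> None:
    """Hypothetical agent loop that checks the kill switch before every action."""
    for step in range(max_steps):
        if kill_switch.is_set():
            log.append(f"terminated before step {step}")
            return
        log.append(f"step {step}")  # stand-in for one tool call or API request
        kill_switch.wait(0.01)  # pace the loop; returns early if the switch is set
    log.append("finished")


kill_switch = threading.Event()
log: List[str] = []
worker = threading.Thread(target=agent_loop, args=(kill_switch, log))
worker.start()
threading.Event().wait(0.05)  # let the agent run a few steps
kill_switch.set()  # an admin or monitoring agent pulls the switch
worker.join(timeout=1.0)
```

Using `kill_switch.wait()` instead of `time.sleep()` means the agent reacts as soon as the switch is set, even in the middle of its pause.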

Q: Can it help with the EU AI Act?

A: Yes. The Agent Compliance package specifically maps agent telemetry to EU AI Act requirements and automates evidence collection.

Pricing

Microsoft has released this as a community-first tool, aiming to standardize the “Governance Plane” of the AI industry.

| Tier | Cost | Key Benefits |
| --- | --- | --- |
| Community (GitHub) | $0.00 | Full 7-package toolkit, MIT license, and 20+ tutorials. |
| Azure Integrated | Consumption-based | Sidecar deployment on AKS and seamless logging to Azure Monitor/Purview. |
| M365 Enterprise | Managed add-on | Direct governance for M365 Copilot agents via admin control centers. |
